Blog: Fortigate CVE-2023-27997 (XORtigate) in the eyes of the owl

Here is a quick explanation of CPDoS, the Cache-Poisoned Denial-of-Service attack. The attack is detailed at https://cpdos.org/ (and on archive.org in case the site disappears).

The cache is life

Whether it is in your microprocessors, your software or most of your websites: the cache is at the heart of performance.

When you (or rather a program) ask the microprocessor to process data from RAM, that data is "cached" in several levels of cache, the fastest being the most expensive. If the data is needed again, magic: it is already in the cache, so there is no need to fetch it from main memory (the RAM), which is too slow (let alone from the hard disk).

It is the same for a website. You make a request that is processed by a slow application, which in turn queries a database ... and returns the content. Many sites put caches in front of their applications (this is the core business of a CDN / Content Delivery Network) and, depending on the settings, if a similar request has been made recently, it is the cached content that is returned to you, without involving the real application / website.
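To make the idea concrete, here is a minimal sketch of a cache sitting in front of a slow application. Everything in it (`TTLCache`, `slow_origin`, the 60-second lifetime) is a made-up illustration, not how any particular CDN works.

```python
import time
from typing import Callable, Dict, List, Tuple


class TTLCache:
    """Toy in-memory cache keyed by URL path, with a time-to-live per entry."""

    def __init__(self, origin: Callable[[str], str], ttl_seconds: float = 60.0):
        self.origin = origin            # the slow application behind the cache
        self.ttl_seconds = ttl_seconds  # assumed lifetime of a cached entry
        self.store: Dict[str, Tuple[float, str]] = {}

    def get(self, path: str) -> str:
        now = time.monotonic()
        entry = self.store.get(path)
        if entry is not None and now - entry[0] < self.ttl_seconds:
            return entry[1]             # cache hit: the origin is not contacted
        body = self.origin(path)        # cache miss: ask the real application
        self.store[path] = (now, body)
        return body


origin_calls: List[str] = []


def slow_origin(path: str) -> str:
    """Stand-in for the application; records every request it receives."""
    origin_calls.append(path)
    return f"content of {path}"


cache = TTLCache(slow_origin)
first = cache.get("/index.html")   # miss, forwarded to the origin
second = cache.get("/index.html")  # hit, served from the cache
print(first == second, len(origin_calls))
```

The second request never reaches `slow_origin`, which is exactly what makes caches fast, and also what the attack abuses.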

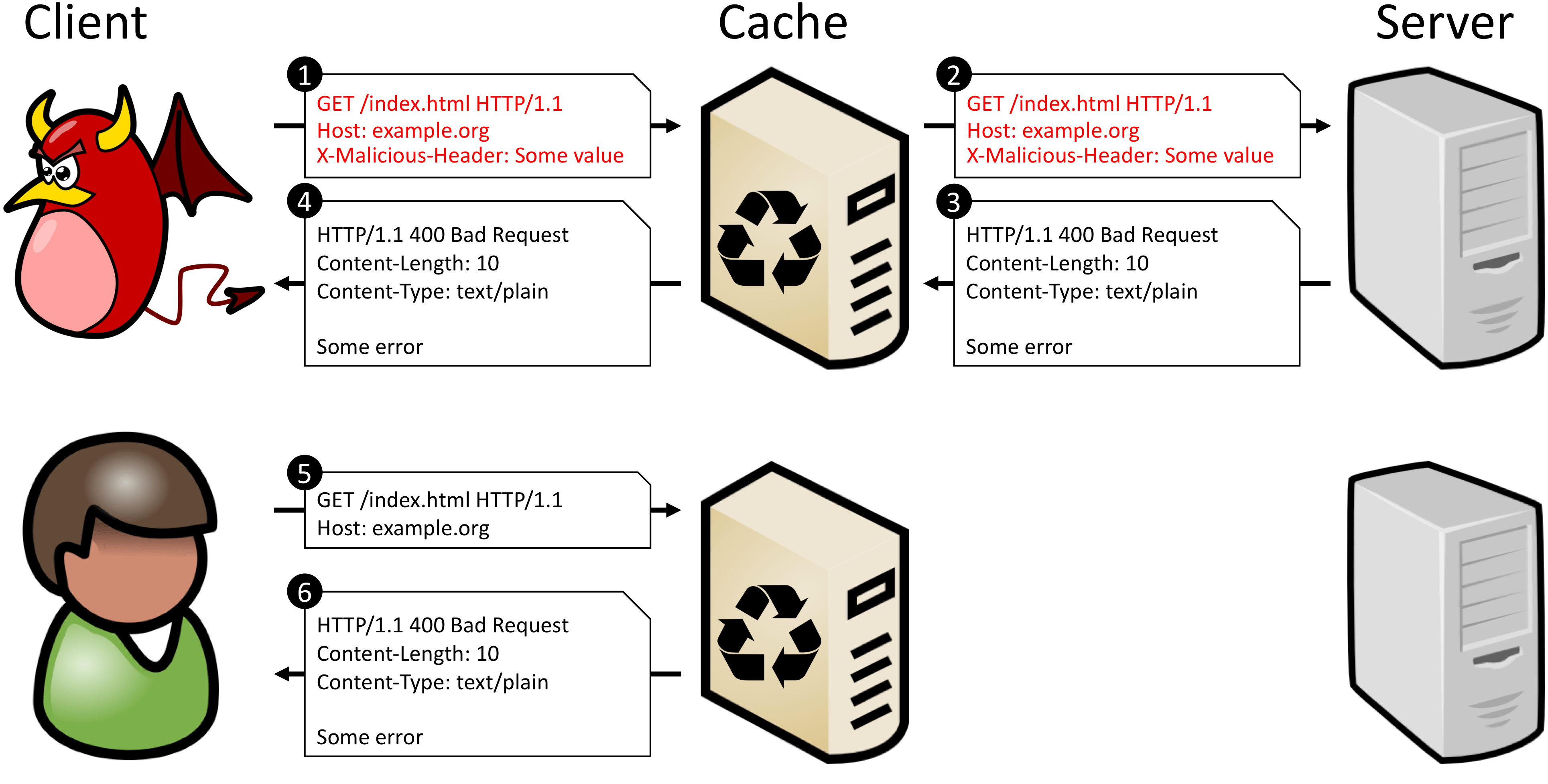

CPDoS comes in several variants of the same attack: send a request through a cache service that is forwarded to the application/website but triggers an error there. That error response gets cached, making the application/website effectively unreachable for other clients until the cached entry expires. All the attacker has to do then is repeat the attack.
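The mechanism can be simulated in a few lines. The sketch below is a toy model loosely based on the oversized-header variant described on cpdos.org: the cache accepts a header that the origin rejects. The names (`NaiveCache`, `origin`) and the 8192-byte limit are assumptions chosen for illustration, and cache expiry is left out for brevity.

```python
from typing import Dict, Tuple

Response = Tuple[int, str]

# Assumed limit: the origin rejects headers larger than this,
# while the toy cache forwards them without complaint.
ORIGIN_MAX_HEADER_BYTES = 8192


def origin(path: str, headers: Dict[str, str]) -> Response:
    """Stand-in for the application: rejects oversized request headers."""
    size = sum(len(name) + len(value) for name, value in headers.items())
    if size > ORIGIN_MAX_HEADER_BYTES:
        return 400, "Bad Request"
    return 200, f"content of {path}"


class NaiveCache:
    """Toy cache keyed by path only, storing whatever the origin answers."""

    def __init__(self) -> None:
        self.store: Dict[str, Response] = {}

    def handle(self, path: str, headers: Dict[str, str]) -> Response:
        if path in self.store:
            return self.store[path]   # served from cache, headers ignored
        response = origin(path, headers)
        self.store[path] = response   # the flaw: error responses are stored too
        return response


cache = NaiveCache()

# The attacker goes first, with a header the origin will refuse.
attacker = cache.handle("/index.html", {"X-Oversized": "A" * 10000})

# A legitimate client then asks for the same page and gets the cached error.
victim = cache.handle("/index.html", {"Accept": "text/html"})
print(attacker, victim)
```

The victim's request is perfectly valid, yet it receives the attacker's `400` because the cache never asks the origin again.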

The site's diagram is clear enough that there is no need to redraw it 😉 :

The article presents 3 variants of this attack: HTTP Header Oversize (HHO), HTTP Meta Character (HMC) and HTTP Method Override (HMO).

The article cited at the beginning includes a table of vulnerable technologies, and CloudFront is (was) vulnerable to almost everything 😉.

A good protection against these attacks is simply to exclude error pages from the cache, at the risk that error-triggering requests, which then always reach the application, are used for a classic denial of service.
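As a rough sketch of that trade-off, the toy cache below refuses to store error responses. The policy function `is_cacheable`, the class `ErrorSkippingCache` and the origin's header limit are all hypothetical names and values picked for the example, not the configuration of any real product.

```python
from typing import Callable, Dict, Tuple

Response = Tuple[int, str]


def is_cacheable(status: int) -> bool:
    """Hypothetical policy: never store client or server errors (4xx/5xx)."""
    return status < 400


class ErrorSkippingCache:
    """Toy cache keyed by path that only stores non-error responses."""

    def __init__(self, origin: Callable[[str, Dict[str, str]], Response]) -> None:
        self.origin = origin
        self.store: Dict[str, Response] = {}
        self.origin_hits = 0  # how many requests reached the application

    def handle(self, path: str, headers: Dict[str, str]) -> Response:
        if path in self.store:
            return self.store[path]
        self.origin_hits += 1
        response = self.origin(path, headers)
        if is_cacheable(response[0]):
            self.store[path] = response
        return response


def toy_origin(path: str, headers: Dict[str, str]) -> Response:
    """Stand-in application that rejects any header value over 8192 bytes."""
    if any(len(value) > 8192 for value in headers.values()):
        return 400, "Bad Request"
    return 200, f"content of {path}"


cache = ErrorSkippingCache(toy_origin)
bad_headers = {"X-Oversized": "A" * 10000}
for _ in range(3):
    cache.handle("/index.html", bad_headers)  # each error reaches the origin

victim = cache.handle("/index.html", {"Accept": "text/html"})
print(victim, cache.origin_hits)
```

The legitimate client now gets its page, but every malformed request went all the way to the application: the poisoning is gone and the classic denial-of-service risk takes its place.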

Alternatively, some WAFs can protect against these attacks through their protocol mismatch protection and thresholds, provided they are placed in front of the cache.